The average sales representative spends sixty-five percent of their time on non-selling activities, according to Salesforce's State of Sales report. Of the remaining thirty-five percent, much is spent without adequate competitive intelligence, objection handling frameworks, or proof points. The sales team knows the product. They do not always know the buyer. And the materials that product marketing provides, when they arrive at all, are often too generic, too late, or too disconnected from the competitive reality on the ground.

The gap is not a skills problem. It is a research problem. Building a genuinely useful battlecard requires knowing what buyers actually say when comparing you to a competitor. Writing an objection handling guide requires knowing which objections arise most frequently and why. Constructing a pitch narrative requires understanding the buyer's emotional journey from problem awareness to purchase confidence. All of this requires data from buyers, not assumptions from internal teams.

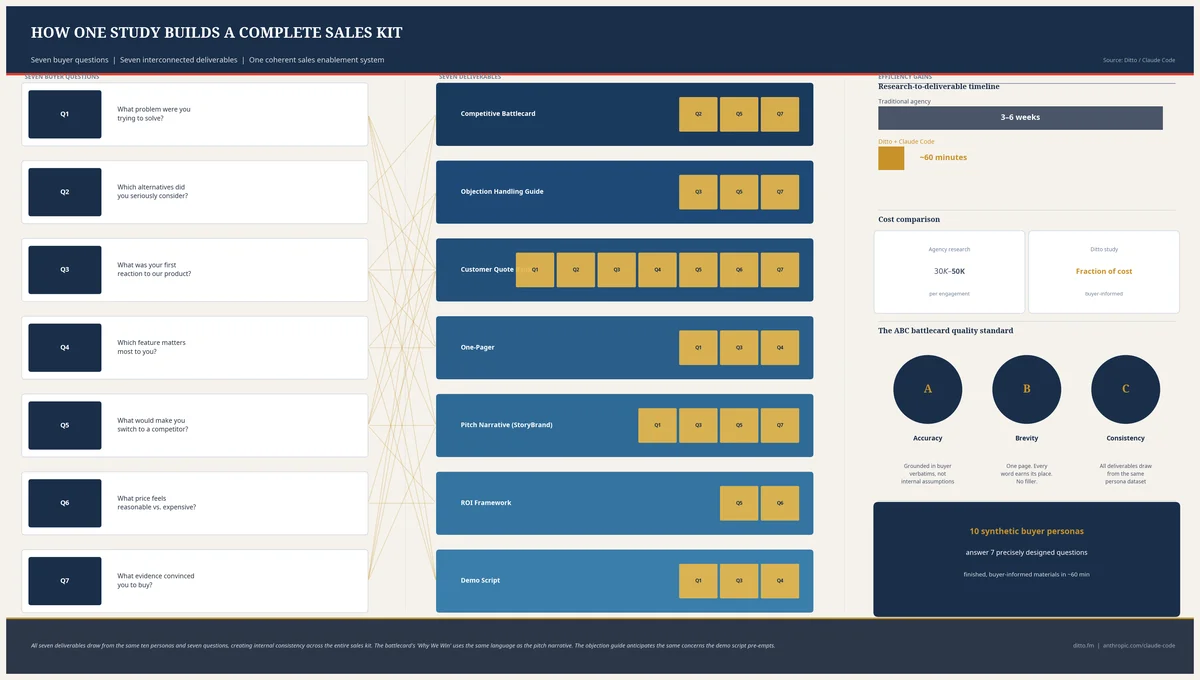

One Ditto study, orchestrated through Claude Code, Anthropic's agentic development environment, produces seven complete sales enablement deliverables in approximately sixty minutes. Not drafts. Not outlines. Finished, buyer-informed materials drawn from ten synthetic personas answering seven precisely designed questions. The sales enablement guide walks through the full workflow, from study design to deliverable generation.

The Sales Enablement Gap

Forrester estimates that sixty to seventy percent of B2B content created by marketing goes entirely unused by sales. Forrester's explanation is straightforward: the content does not answer the questions that arise in actual sales conversations. It speaks to the market in aggregate rather than to the buyer in the room. A beautifully designed product overview deck does not help a rep who has just been told "your competitor does the same thing for half the price."

SiriusDecisions (now part of Forrester) consistently found that the number one request from sales teams to marketing teams is better competitive intelligence. Not more content. Better intelligence. Reps want to know what the competition is saying, what buyers believe about alternatives, and what evidence will tip the decision. They want specificity, not slogans.

The gap exists not because product marketing teams lack skill but because the research required to build good enablement materials is expensive and slow. Competitive intelligence research typically costs $30,000 to $50,000 from a specialist agency and takes three to six weeks. By the time the deliverables arrive, the competitive landscape has shifted. A new feature has launched. A competitor has changed pricing. A previously unknown entrant has appeared in two deals. The enablement materials, painstakingly researched, are already partially obsolete.

This creates a vicious cycle. Marketing produces generic materials because bespoke research is too expensive. Sales ignores generic materials because they do not help in specific conversations. Marketing interprets the lack of adoption as a distribution problem and builds a content portal. Sales ignores the portal. The sixty to seventy percent waste figure persists.

Seven Deliverables from One Study

The Ditto sales enablement study uses seven questions, each designed to extract specific buyer intelligence. The questions are interconnected: each deliverable draws from multiple questions, and each question feeds multiple deliverables. The result is an integrated kit rather than a collection of standalone documents.

Competitive Battlecard (drawn from Q2, Q5, Q7). The battlecard captures why buyers choose you over alternatives, what would trigger a switch, and what evidence they need. See the dedicated competitive battlecards article for the full battlecard methodology.

Objection Handling Guide (drawn from Q3, Q5, Q7). First reactions reveal scepticism patterns. Switching barriers reveal inertia. Proof requirements reveal what overcomes doubt. Together, they produce a categorised objection library with buyer-tested responses.

Customer Evidence Quote Bank (drawn from all questions). Every verbatim response from the ten personas is catalogued by theme, sentiment, and use case. These are not marketing-polished testimonials. They are the raw language buyers use when describing problems, evaluating solutions, and articulating what matters. Sales teams use them as social proof in conversations: "Here's what other buyers in your position have told us."

One-Pager (drawn from Q1, Q3, Q4). Pain points establish the problem. First reactions identify which value propositions resonate. Urgency language provides the "why now." The one-pager becomes a leave-behind that mirrors the buyer's own language rather than the company's internal jargon.

Pitch Narrative using StoryBrand Framework (drawn from Q1, Q3, Q5, Q7). The narrative applies Donald Miller's StoryBrand framework, populated with actual buyer data rather than internal assumptions. It follows the buyer's emotional arc from problem to resolution.

ROI Framework (drawn from Q5, Q6). Price perception data reveals what buyers consider reasonable, what they consider expensive, and where the value gap lies. Combined with switching trigger data, this produces an ROI model grounded in buyer expectations. For a deeper treatment, see the pricing research article.

Demo Script (drawn from Q1, Q3, Q4). The demo opens with the hero feature buyers care most about, addresses the pain points they described, and pre-empts the scepticism they expressed. It is a conversation guide, not a feature tour.

Each deliverable is internally consistent because all seven draw from the same ten personas answering the same seven questions. The battlecard's "Why We Win" section uses the same language as the pitch narrative's resolution. The objection handling guide anticipates the same concerns the demo script pre-empts. The quote bank provides evidence for every claim in the one-pager. This interconnection is what transforms seven documents into a coherent sales kit.
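The interconnection is easy to check once the mapping is written down. The following is a minimal Python sketch that transcribes the "drawn from" lists above and inverts them to show which deliverables each question feeds. The variable and function names are illustrative, not part of Ditto or Claude Code.

```python
# Deliverable -> source questions, transcribed from the list above.
ALL_QUESTIONS = ["Q1", "Q2", "Q3", "Q4", "Q5", "Q6", "Q7"]

DELIVERABLE_SOURCES = {
    "Competitive Battlecard": ["Q2", "Q5", "Q7"],
    "Objection Handling Guide": ["Q3", "Q5", "Q7"],
    "Customer Evidence Quote Bank": list(ALL_QUESTIONS),  # drawn from all questions
    "One-Pager": ["Q1", "Q3", "Q4"],
    "Pitch Narrative": ["Q1", "Q3", "Q5", "Q7"],
    "ROI Framework": ["Q5", "Q6"],
    "Demo Script": ["Q1", "Q3", "Q4"],
}


def invert(sources):
    """Return question -> list of deliverables that draw on it."""
    feeds = {q: [] for q in ALL_QUESTIONS}
    for deliverable, questions in sources.items():
        for q in questions:
            feeds[q].append(deliverable)
    return feeds


QUESTION_FEEDS = invert(DELIVERABLE_SOURCES)

for question, deliverables in QUESTION_FEEDS.items():
    print(f"{question} feeds {len(deliverables)}: {', '.join(deliverables)}")
```

Run as written, every question feeds at least two deliverables, which is the property that makes the kit integrated rather than a set of standalone documents.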

The Seven Questions

Each question serves a dual purpose: it extracts specific buyer intelligence for the deliverables, and it reveals the buyer's decision-making framework. Claude Code customises the questions with your product details, competitive landscape, and category context, then runs the study through Ditto's API against ten personas.

Q1: Pain Point Discovery

"You're evaluating solutions in [category]. What is the single biggest pain point driving your search?"

This question surfaces three layers of intelligence. First, the pain themes themselves: what problems buyers articulate and how intensely they feel them. Second, their current workflow: the specific processes, tools, and workarounds they use today. Third, the alternative tools they have already considered or rejected. The pain point question establishes the emotional foundation for the entire kit. It tells the sales rep what to lead with in the first sixty seconds of any conversation.

Q2: Competitive Decision Drivers

"Compared to [Competitor A] and [Competitor B], what would make you choose one solution over the others?"

This is the battlecard's primary fuel. Buyers reveal not just their preferences but their decision criteria: the specific attributes they weigh when comparing options. The responses populate the battlecard's "Why We Win" section with actual buyer language, frequency counts (how many of ten personas cited each advantage), and the competitive gaps that create opportunity. A strong battlecard does not claim superiority. It demonstrates it through the buyer's own evaluation framework.
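The frequency counts are simple to produce once responses have been tagged with themes. Here is a minimal Python sketch, assuming each persona's Q2 answer has already been coded into a set of theme labels. The labels and data below are hypothetical placeholders, not output from a real study.

```python
from collections import Counter

# Hypothetical coded Q2 responses: one set of theme labels per persona.
coded_responses = [
    {"integration flexibility", "pricing"},
    {"integration flexibility"},
    {"integration flexibility", "reporting"},
    {"reporting"},
    {"integration flexibility", "support"},
    {"integration flexibility", "pricing"},
    {"reporting", "pricing"},
    {"integration flexibility"},
    {"integration flexibility", "reporting"},
    {"support"},
]


def theme_frequencies(responses):
    """Count how many personas mentioned each theme (once per persona)."""
    counts = Counter()
    for themes in responses:
        counts.update(set(themes))
    return counts


def top_themes(responses, n=3):
    """Format the n most-cited themes as 'X of Y' statements."""
    total = len(responses)
    ranked = theme_frequencies(responses).most_common(n)
    return [f"{count} of {total} personas cited {theme}" for theme, count in ranked]


for line in top_themes(coded_responses):
    print(line)
```

Counting each persona once per theme keeps a single talkative respondent from inflating a theme's apparent weight.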

Q3: First Reaction and Scepticism

"If I presented [your product] to you right now, what's your first reaction? What excites you, and what makes you sceptical?"

First reactions are unfiltered. They reveal what resonates immediately (the value propositions worth amplifying) and what triggers doubt (the objections that must be addressed proactively). The excitement themes feed the one-pager's value proposition hierarchy. The scepticism patterns feed the objection handling guide's category structure. Together, they tell the rep what the buyer will probably say before the buyer says it.

Q4: The Hero Feature

"If this product could do ONE thing brilliantly, what would you want that to be?"

Constraint forces clarity. When buyers can choose only one capability, they reveal what actually drives their purchasing decision rather than what would be nice to have. The hero feature becomes the demo's opening moment: the capability you show first, before anything else, because it addresses the buyer's primary need. It also establishes the feature priority for the one-pager and the pitch narrative's "plan" section.

Q5: Switching Triggers and Barriers

"What would make you switch from your current solution? And what's currently blocking you from switching?"

Switching triggers reveal when to attack: the specific conditions, frustrations, or events that make a buyer receptive to change. Switching barriers reveal what to address: the inertia, integration concerns, contractual obligations, or organisational resistance that prevents action. The triggers feed the battlecard's timing intelligence. The barriers feed the objection handling guide's inertia section. The gap between "I want to switch" and "I cannot switch" is precisely where sales enablement creates value, and this question maps that gap explicitly.

Q6: Price Perception

"What would you expect to pay for a solution that solves this problem? At what price would it feel like a steal, and at what price would it feel too expensive?"

Price perception data feeds two deliverables directly. The ROI framework uses the gap between expected price and perceived value to build a quantified justification model. The objection handling guide uses the "too expensive" threshold to prepare price objection responses. When a buyer says "that's too much," the rep needs to know whether the objection is absolute (over budget) or relative (compared to a cheaper alternative). This question provides the data to distinguish between the two.
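The absolute-versus-relative distinction can be roughed out in code. This is a minimal Python sketch, assuming each persona's Q6 answer has been reduced to three numbers. The figures, the `PriceResponse` type, and the classification rule are all illustrative assumptions rather than anything Ditto prescribes.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PriceResponse:
    expected: float       # what the persona expects to pay
    steal: float          # price that would feel like a steal
    too_expensive: float  # price that would feel too expensive


def price_bands(responses):
    """Summarise the panel's price perception with medians."""
    return {
        "steal": median(r.steal for r in responses),
        "expected": median(r.expected for r in responses),
        "too_expensive": median(r.too_expensive for r in responses),
    }


def classify_objection(quoted_price, bands, cheaper_alternative=None):
    """Heuristic (an assumption): label a 'that's too much' objection."""
    if quoted_price >= bands["too_expensive"]:
        return "absolute"  # above what the panel considers affordable
    if cheaper_alternative is not None and cheaper_alternative < quoted_price:
        return "relative"  # acceptable in isolation, but undercut
    return "unclear"


# Hypothetical monthly prices from five personas.
responses = [
    PriceResponse(expected=100, steal=50, too_expensive=200),
    PriceResponse(expected=120, steal=60, too_expensive=250),
    PriceResponse(expected=150, steal=80, too_expensive=300),
    PriceResponse(expected=90, steal=40, too_expensive=180),
    PriceResponse(expected=130, steal=70, too_expensive=240),
]

bands = price_bands(responses)
print(bands)
print(classify_objection(260, bands))
print(classify_objection(150, bands, cheaper_alternative=99))
```

Medians are used rather than means so that one outlying answer does not drag the thresholds. A real ROI model would need more than three numbers per persona; this only shows where the Q6 data enters.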

Q7: Proof Requirements

"What evidence would you need to feel confident recommending this solution to your team or manager?"

The proof question is the closer's best friend. It reveals the specific evidence hierarchy each buyer type demands: case studies, ROI data, peer recommendations, analyst reports, free trials, security certifications, or customer references. The proof type hierarchy feeds the battlecard's closing arguments, the pitch narrative's resolution, and the objection handling guide's evidence-based responses. When a buyer says "I need to see proof," the rep already knows what kind of proof they mean.
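The seven questions above are templates with bracketed placeholders. Here is a minimal Python sketch of how they might be filled with product context before a study is run. It does not call Ditto's API, and the product and competitor names are hypothetical.

```python
import re

QUESTION_TEMPLATES = {
    "Q1": "You're evaluating solutions in [category]. What is the single "
          "biggest pain point driving your search?",
    "Q2": "Compared to [Competitor A] and [Competitor B], what would make "
          "you choose one solution over the others?",
    "Q3": "If I presented [your product] to you right now, what's your first "
          "reaction? What excites you, and what makes you sceptical?",
    "Q4": "If this product could do ONE thing brilliantly, what would you "
          "want that to be?",
    "Q5": "What would make you switch from your current solution? And what's "
          "currently blocking you from switching?",
    "Q6": "What would you expect to pay for a solution that solves this "
          "problem? At what price would it feel like a steal, and at what "
          "price would it feel too expensive?",
    "Q7": "What evidence would you need to feel confident recommending this "
          "solution to your team or manager?",
}

PLACEHOLDER = re.compile(r"\[([^\]]+)\]")


def fill(template, context):
    """Replace each [placeholder] with its value; fail loudly if one is missing."""
    def substitute(match):
        key = match.group(1)
        if key not in context:
            raise KeyError(f"no value supplied for placeholder [{key}]")
        return context[key]
    return PLACEHOLDER.sub(substitute, template)


# Hypothetical product context.
context = {
    "category": "marketing analytics",
    "Competitor A": "ExampleCo",
    "Competitor B": "SampleSoft",
    "your product": "AcmeReports",
}

questions = {qid: fill(text, context) for qid, text in QUESTION_TEMPLATES.items()}
print(questions["Q2"])
```

Raising on a missing placeholder is deliberate: a question sent to the panel with "[Competitor B]" still in it would waste the study.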

The Battlecard: Accuracy, Brevity, Consistency

The competitive battlecard is the single most requested and most frequently discarded piece of sales enablement content. Reps request battlecards because competitive conversations are unavoidable. They discard battlecards because one factual error destroys credibility with the entire sales team. If the card says a competitor lacks a feature and the buyer corrects the rep mid-conversation, the card gets binned. Permanently.

The ABC quality standard (Accuracy, Brevity, Consistency) addresses this directly.

Accuracy means every claim on the card is sourced from buyer responses, not internal opinion. When the card says "7 of 10 buyers cited integration flexibility as the primary reason for choosing us," that is a verifiable data point from the study. When it says "Competitor A is perceived as stronger on reporting," that is what buyers actually reported, not what the product team assumes. Buyer-sourced accuracy is harder to dispute because it describes market perception, not product claims.

Brevity means the card is scannable in under sixty seconds. A rep pulling up a battlecard mid-call needs the answer immediately. The structure that works: Why We Win (top three themes with persona language and frequency), Competitor Strengths with Responses (acknowledging where competitors are perceived as strong and how to reframe), Landmine Questions (questions that expose competitor gaps without sounding adversarial), Quick Dismisses (one to two sentence rebuttals for common competitor claims), Switching Triggers (when to go on the offensive), and Proof Points (what evidence buyers demanded).

Consistency means the battlecard's language matches the pitch narrative, the one-pager, the demo script, and the objection handling guide. When all seven deliverables are generated from the same study, consistency is structural rather than aspirational. The same buyer language appears across all documents because it came from the same ten personas.
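The six-part structure and the brevity rules lend themselves to a simple check. Below is a minimal Python sketch of a battlecard record with two of the constraints described above: at most three "Why We Win" themes, and quick dismisses of one to two sentences. The class, field names, and sample content are illustrative assumptions, and the sentence counter is deliberately naive.

```python
import re
from dataclasses import dataclass, replace
from typing import Dict, List


@dataclass
class Battlecard:
    why_we_win: List[str]
    competitor_strengths: Dict[str, str]  # perceived strength -> reframing response
    landmine_questions: List[str]
    quick_dismisses: List[str]
    switching_triggers: List[str]
    proof_points: List[str]

    def brevity_issues(self):
        """Return a list of brevity problems; empty means the card passes."""
        issues = []
        if len(self.why_we_win) > 3:
            issues.append("Why We Win should list at most three themes")
        for text in self.quick_dismisses:
            # Naive split on sentence-ending punctuation.
            sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
            if len(sentences) > 2:
                issues.append(f"Quick dismiss runs past two sentences: {text!r}")
        return issues


# Placeholder content for illustration only.
card = Battlecard(
    why_we_win=["Integration flexibility (7 of 10)", "Theme two", "Theme three"],
    competitor_strengths={"Reporting": "Acknowledge, then reframe."},
    landmine_questions=["How long did your last integration take?"],
    quick_dismisses=["That claim covers one tier only. Ask which tier."],
    switching_triggers=["Contract renewal"],
    proof_points=["Case study"],
)

overlong = replace(
    card,
    why_we_win=card.why_we_win + ["Theme four"],
    quick_dismisses=["One. Two. Three."],
)

print(card.brevity_issues())
print(overlong.brevity_issues())
```

A check like this catches length creep, not factual errors; accuracy still depends on every claim tracing back to a study response.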

The Pitch Narrative: StoryBrand Applied

Donald Miller's StoryBrand framework is one of the most widely adopted narrative structures in B2B sales. Its power lies in its simplicity: the customer is the hero, your brand is the guide, and the story follows a seven-part arc. The difficulty, for most companies, is populating the framework with buyer data rather than internal assumptions. The Ditto study solves this directly.

Character: The hero is a composite buyer drawn from the ten personas. Their demographics, role, industry, and context are specific. They are not "a marketing director." They are "a marketing director at a mid-market SaaS company who manages a team of four, reports to the VP of Growth, and spends three hours every week manually compiling campaign performance reports."

Problem: Three layers, all drawn from Q1 and Q3. The external problem (the tangible pain: "manual reporting takes three hours weekly"). The internal problem (the emotional frustration: "I feel like I'm wasting my team's talent on busywork"). The philosophical problem (the deeper injustice: "skilled professionals shouldn't spend their time copying data between spreadsheets").

Guide: Your brand enters as the guide with empathy and authority. The empathy comes from the study's verbatim language: "We hear this constantly from marketing teams. X of Y buyers told us the same thing." The authority comes from proof: evidence, credentials, and the research itself. Showing the buyer that you have studied their problem signals competence.

Plan: Three clear steps derived from Q4's hero feature and Q3's excitement themes. Step one: the immediate quick win. Step two: the core value realisation. Step three: the expanded benefit. Three steps reduce cognitive load. More than three and the buyer struggles to remember the path forward.

Call to Action: Anchored in Q7's proof type hierarchy. If buyers want case studies, the CTA includes a case study. If they want a free trial, the CTA offers one. The call to action matches the evidence type that the buyer's own peers demanded.

Avoid Failure: Q3's scepticism themes, reframed. Every doubt the buyer expressed is addressed explicitly. "You might be wondering about integration complexity. Here's what other buyers found." The avoid-failure section is not fear-mongering. It is pre-emptive reassurance informed by data.

Success: Q3's excitement themes, amplified. The vision of the buyer's world after adoption, described in the language they used when expressing enthusiasm. The pitch narrative writes itself when you have the data. Without data, it is guesswork dressed in framework language.
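The Call to Action step above amounts to a lookup driven by Q7. This is a minimal Python sketch, assuming Q7 answers have been coded into proof-type labels. The labels, CTA wording, and sample data are hypothetical.

```python
from collections import Counter

# Hypothetical mapping from proof type to the CTA that supplies it.
CTA_BY_PROOF = {
    "case study": "Read how a team like yours solved this",
    "free trial": "Start a free trial",
    "customer reference": "Talk to a current customer",
    "security certification": "Review our security documentation",
}


def choose_cta(proof_mentions, default="Book a conversation"):
    """Pick the CTA matching the most frequently requested proof type."""
    for proof_type, _count in Counter(proof_mentions).most_common():
        if proof_type in CTA_BY_PROOF:
            return CTA_BY_PROOF[proof_type]
    return default


# Hypothetical coded Q7 responses across ten personas.
q7_mentions = (
    ["free trial"] * 4
    + ["case study"] * 3
    + ["security certification"] * 2
    + ["analyst report"]
)

print(choose_cta(q7_mentions))
```

The function walks down the ranking rather than taking only the top item, so a proof type with no matching CTA falls through to the next most requested one.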

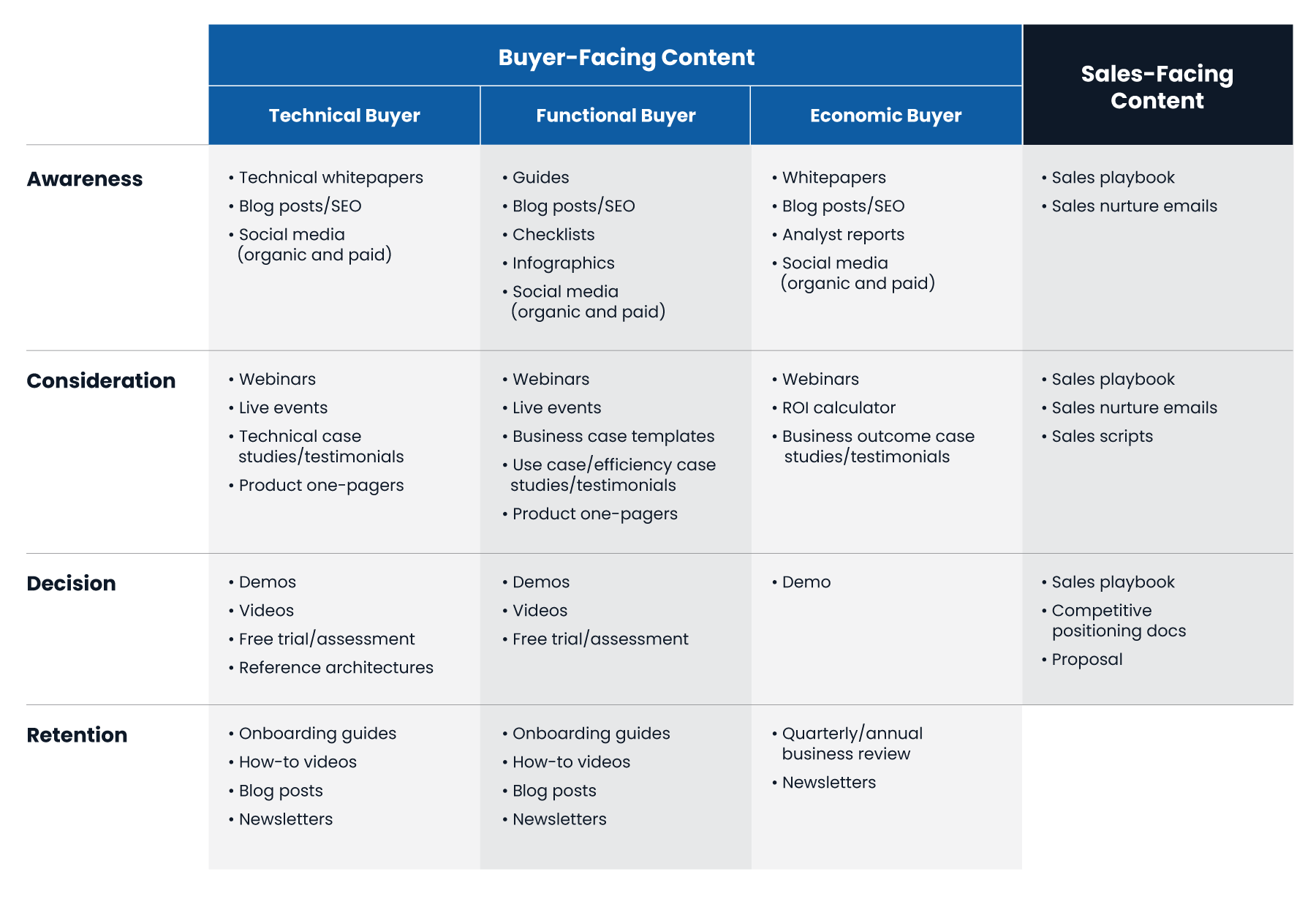

Advanced: Buyer Committee Studies

Enterprise sales rarely involve a single decision-maker. The typical B2B purchasing committee includes three to seven stakeholders, each evaluating the solution through a different lens. The economic buyer cares about ROI and risk. The technical evaluator cares about architecture, security, and integration. The end user cares about usability and daily workflow impact. The executive sponsor cares about strategic alignment and organisational credibility.

A single Ditto study targets one buyer persona. But nothing prevents you from running three or four studies in parallel, each targeting a different member of the buying committee. Same seven questions, persona-specific answers. With four studies, the result is twenty-eight deliverables (seven per persona) in approximately two hours, including four battlecards (one per persona), four objection handling guides, and four pitch narratives, each informed by that persona's own proof requirements.

The practical value is immense. Consider what happens when a sales rep discovers, mid-cycle, that the technical evaluator has concerns about API architecture. Without persona-specific enablement, the rep improvises. With the technical evaluator's battlecard, they have buyer-validated responses to architecture objections, the specific proof types that technical evaluators demand (documentation, sandbox access, architecture diagrams), and the competitive comparison from a technical perspective rather than a business one.

The buyer committee study also reveals alignment and misalignment across roles. If the economic buyer prioritises cost reduction while the end user prioritises ease of use, the sales team needs to navigate that tension explicitly. If the technical evaluator's top objection is security while the executive sponsor's top objection is vendor stability, the proof package needs to address both. These cross-persona insights are invisible in a single-persona study and transformative in a multi-persona one.

Claude Code orchestrates the parallel studies automatically: creating the research groups with role-specific demographic filters, running identical questions against each, and producing a comparative analysis that highlights where personas agree (universal selling points) and where they diverge (persona-specific messaging requirements). The customer segmentation article describes the multi-group comparison methodology in detail.
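The agree-and-diverge comparison reduces to set operations once each role's themes are coded. Here is a minimal Python sketch, assuming one set of theme labels per committee role. The themes are hypothetical, and this is not the comparison Claude Code actually runs.

```python
DELIVERABLES_PER_STUDY = 7


def compare_roles(themes_by_role):
    """Split themes into those every role raised and those only some raised."""
    if not themes_by_role:
        return set(), {}
    universal = set.intersection(*themes_by_role.values())
    divergent = {
        role: sorted(themes - universal)
        for role, themes in themes_by_role.items()
    }
    return universal, divergent


# Hypothetical coded themes from four parallel studies.
themes_by_role = {
    "economic buyer": {"integration flexibility", "roi", "risk"},
    "technical evaluator": {"integration flexibility", "security", "architecture"},
    "end user": {"integration flexibility", "usability"},
    "executive sponsor": {"integration flexibility", "strategic alignment"},
}

universal, divergent = compare_roles(themes_by_role)
total_deliverables = len(themes_by_role) * DELIVERABLES_PER_STUDY

print("Universal selling points:", sorted(universal))
for role, themes in divergent.items():
    print(f"{role}: {', '.join(themes)}")
print("Total deliverables:", total_deliverables)
```

Themes in the intersection are candidates for universal messaging; everything else belongs in the persona-specific materials.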

Where Sales Enablement Fits in the PMM Stack

Sales enablement is the execution layer closest to revenue. It is where every other product marketing function converges into materials that directly influence purchasing decisions. The stack flows as follows:

Customer segmentation identifies who you are selling to. Without segmentation, enablement materials target everyone and resonate with nobody.

Positioning validation establishes how you are positioned against alternatives for your target segment. The positioning informs the battlecard's "Why We Win" and the pitch narrative's guide section.

Messaging testing validates the specific language that resonates. The tested messages become the one-pager's headlines and the demo script's talking points.

Voice of Customer research provides ongoing buyer intelligence. VoC data feeds the quote bank and keeps the objection handling guide current as buyer concerns evolve.

Competitive intelligence produces the detailed battlecards. The sales enablement study generates a rapid battlecard; the dedicated competitive study goes deeper with feature-by-feature comparison and win/loss analysis.

Pricing research informs the ROI framework. The enablement study captures price perception; the dedicated pricing study refines willingness-to-pay with Van Westendorp analysis and conjoint-style trade-offs.

GTM strategy validation determines the channels and motions through which enablement materials are deployed. A PLG motion needs different materials from an enterprise sales motion.

Content marketing engine transforms enablement insights into demand generation content. The quote bank becomes blog posts. The battlecard becomes comparison pages. The pitch narrative becomes the website's core story.

Sales enablement (this article) is where it all comes together. It draws from competitive intelligence, messaging, VoC, and pricing to produce the materials that reps use in conversations with buyers.

Product launch research deploys enablement materials at launch. The battlecard, pitch narrative, and objection handling guide are ready before the first sales call, not weeks after.

The enabling insight is that sales enablement is not a standalone function. It is the synthesis of every other PMM discipline, translated into the specific format a sales representative needs at the moment of buyer engagement. When the upstream research is absent, enablement materials default to internal assumptions. When the upstream research is present, enablement materials reflect the market as it actually is.

Limitations and Where Traditional Methods Still Win

Synthetic personas report what they would do, not what they have done. The Ditto sales enablement kit is best understood as a continuously refreshable baseline: a set of buyer-informed materials that can be produced in an hour and updated monthly as the competitive landscape shifts. It excels at breadth (ten personas, seven questions, seven deliverables) and speed (sixty minutes, not six weeks).

What it cannot replace is deal-specific intelligence. Real win/loss interviews after closed deals, whether won or lost, provide insight that no synthetic study can replicate. "We lost the Acme deal because their CTO insisted on on-premise deployment and our competitor offered it" is a specific, irreplaceable data point. The synthetic study can predict that some technical evaluators will prioritise deployment flexibility. It cannot tell you that the Acme CTO specifically rejected your cloud-only architecture in the final evaluation round.

The recommended approach is layered. Use Ditto for the continuous baseline: produce the seven deliverables, refresh them at least quarterly, and ensure every rep has buyer-informed materials from day one. Use traditional win/loss interviews for deal-specific learning: what happened in that particular sales cycle, with that particular buyer, against that particular competitor. The synthetic kit tells reps what to expect. The win/loss data tells them what actually happened. Together, they produce an enablement programme that is both broad and deep, both current and historically informed.

Getting Started

If your sales team's competitive response to "why should I choose you over [competitor]" is "we have better customer support," your enablement materials are not doing their job. "We have better customer support" is a claim. "Seven of ten buyers told us that integration flexibility was the deciding factor" is evidence. The difference between the two is research.

Ditto provides the always-available research panel of over 300,000 synthetic personas. Claude Code handles the orchestration: creating the research group, designing the seven questions with your product context, running the study, extracting intelligence from the responses, and producing the seven deliverables. The sales enablement guide walks through the full workflow, from study design to deliverable generation.

Sixty to seventy percent of B2B content goes unused by sales. The reason is not that marketing produces too little content. It is that marketing produces content without buyer data. One study, seven questions, ten personas, sixty minutes. Seven deliverables that sales will actually use, because they contain what buyers actually said.

The Claude Code and Ditto for Product Marketing Series

This article is part of a series on using Claude Code and Ditto for product marketing. Each article explains a specific workflow; each has a corresponding Claude Code technical guide for hands-on implementation.

Part 1: How to Validate Product Positioning with Claude Code and Ditto | Claude Code Guide

Part 2: How to Build Competitive Battlecards with Claude Code and Ditto | Claude Code Guide

Part 3: How to Research Pricing with Claude Code and Ditto | Claude Code Guide

Part 4: How to Test Product Messaging with Claude Code and Ditto | Claude Code Guide

Part 5: How to Run Voice of Customer Research with Claude Code and Ditto | Claude Code Guide

Part 6: How to Segment Customers with Claude Code and Ditto | Claude Code Guide

Part 7: How to Validate GTM Strategy with Claude Code and Ditto | Claude Code Guide

Part 8: How to Build a Content Marketing Engine with Claude Code and Ditto | Claude Code Guide

Part 9: How to Build Sales Enablement with Claude Code and Ditto (this article) | Claude Code Guide

Part 10: How to Research a Product Launch with Claude Code and Ditto | Claude Code Guide